In the first post of this series on PyQt

Using PyQt with QtAgg in Jupyterlab – I – a first simple example

we have studied how to set up a PyQt application in a Jupyterlab notebook. The key to getting a seamless integration was to invoke the QtAgg-backend of Matplotlib. Apart from that, we did not need any of Matplotlib’s functionality. For our first PyQt test application we just used multiple nested Qt-widgets in a QMainWindow to create a simple, but interactive and instructive application in a Qt-window on the desktop.

So, PyQt works well with QtAgg and IPython. We just construct and show a QMainWindow; we need no explicit exec() command. An advantage of using PyQt is that we get movable, resizable windows on our Linux desktop, outside the browser-bound Jupyterlab environment. Furthermore, PyQt offers a lot of widgets to build a full-fledged graphical application interface with Python code.

But our funny PyQt example application still blocked the execution of code in other notebook cells! It just demonstrated why we need background threads when working with Jupyterlab and long running code segments. This would in particular be helpful when working with Machine Learning [ML] algorithms. Would it not be nice to put a task like the training of an ML algorithm into the background? And to redirect the intermediate output after training epochs into a textbox in a desktop window? While we work with other code in the same notebook?

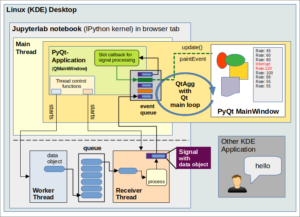

The utilization of background threads is one of the main objectives of this post series. In the end we want to see a PyQt application (also hosting e.g. a Matplotlib figure canvas) which displays data that we created in an ML background job. All controlled from a Jupyterlab Python notebook.

To achieve this goal we need a general strategy for splitting work between foreground and background tasks. The graphic above indicates the direction in which we will move.

But first we need a toolbox and an overview of possible restrictions regarding Python threads and PyQt GUI widgets. In this post we will therefore look at relevant topics like concurrency limitations due to the Python GIL, thread support in Qt, Qt’s approach to inter-thread communication, signals and, once again, Qt’s main event loop. I will also discuss some obstacles which we have to overcome. All of this will give us sufficient knowledge to understand a concrete pattern for workload distribution which I will present in the next post.

Level of this post: Advanced. Some general experience with asynchronous programming is helpful. But beginners have a chance, too. For an ML project I myself had to learn at rather short notice how one can handle thread interaction with Qt. In the last section of this post you will find links to comprehensive and helpful articles on the Internet. Regarding signals I especially recommend [1.1]. Regarding threads and the helpful effects of event loops for inter-thread communication I recommend reading [3.1] and, in particular, [3.2] (!). Regarding the difference between signals and events I found the discussions in [2.1] helpful.

Jupyterlab – blocked code cells and freezing GUIs

A Python notebook in Jupyterlab is a typical blocking application. A cell that executes long running code, e.g. in a loop, occupies the Python interpreter and blocks it from executing (shorter) code in other cells. Long running code may also render GUI applications, which you may have started already, unresponsive or “frozen”. This could e.g. be a Qt-window with Matplotlib [MPL] figures, textboxes and control elements. The IPython kernel must split available CPU time between handling keyboard input to notebook cells, Python code interpretation and interaction with external loops.

Any interactive GUI-application – like a Qt application – depends on a loop that waits and checks periodically for events on the GUI-interface and dispatches information about certain events to dedicated event handling functions. Such a “main event loop” for a graphical (PyQt) application started from your notebook competes with other loops, such as the loops handling keyboard input for Jupyterlab notebook cells.

Thus, for a combination of Jupyterlab (IPython), Python code and graphical (Py)Qt windows there are at least three blocking effects we must consider:

- The editing of cells (very similar to entering commands at a “prompt”) could block the central event loops of already started GUI applications – and vice versa.

- Any long lasting Python code running in one notebook cell will prevent the execution of code prepared in some other cell. In addition it will make GUI applications unresponsive as their event loop and Python-based event handler functions cannot be executed.

- Any long running Python code in a GUI-callback, which was invoked to handle events, may block the GUI-loop for other application windows AND the running of code in other notebook cells.

Note that running code in one cell does not block pure editing in another notebook cell. IPython knows how to delegate CPU time to interpreting Python code and command editing. The crucial points are other (external) loops, such as GUI event loops, and long running Python command sequences.

With respect to Qt the first point in the list above has been solved by QtAgg for us: Qt’s main event loop is intermittently executed when the main IPython loop for handling input runs idle. This is achieved via a hook mechanism. See another post series on Matplotlib and QtAgg for more details. Or see [7.1] and the code for QtAgg [7.2].

The other two points can, however, become a real nuisance for Jupyterlab users. Point 2 occurs e.g. when we have started a long ML training run or when we apply an ML algorithm to many data objects. The third point reminds us of our own responsibility not to perform long lasting calculations or data processing in event handlers of a GUI loop.

A typical solution to overcome point 2 seems to be threads. By moving the code execution of one cell to a background thread we want to be able to run at least short code sequences in other cells in parallel to the background job, or to start further background jobs from other cells. In addition, the GUI main loop would intermittently run in situations where no keyboard input to notebook cells must be handled. Besides solving blocking effects, threads could in principle also raise performance on a CPU with multiple cores. But is the latter possible with Python at all?
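To make the idea concrete before Qt enters the picture, here is a minimal sketch with Python’s standard threading module. The function long_job and its sleep-based “epochs” are hypothetical placeholders for real work such as an ML training loop; the point is only that the starting code returns immediately while the job continues in the background.

```python
import threading
import time


def long_job(n_epochs, log):
    """Hypothetical stand-in for a long-running cell, e.g. ML training epochs."""
    for epoch in range(n_epochs):
        time.sleep(0.05)                  # placeholder for the real work of one epoch
        log.append(f"epoch {epoch} done")


log = []
worker = threading.Thread(target=long_job, args=(5, log), daemon=True)
worker.start()

# start() returns at once; the foreground can continue with other code.
print("foreground still responsive, entries so far:", len(log))

# Later (e.g. in another cell) we may wait for the job to finish.
worker.join()
print(log[-1])
```

In a real notebook you would not call join() right away, because that blocks the cell again; you would rather inspect worker.is_alive() or the log from another cell later.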

Threads, concurrency limitations due to the GIL

Threads are a useful tool to put some work into the background of an application which runs some task in the foreground and thereby blocks other activities there (as IPython does).

Without going into details: A thread is like a parallel, independent space of command execution. So, using threads is about “concurrency“. Hardcore Linux users are accustomed to putting jobs in the background of a command shell all the time. And they know: On a multi-core CPU or a multi-CPU Linux system we can leave it to the OS where it wants to run a thread – on the same CPU core as the main application or on another one. The Linux scheduler does this work and the necessary context switching for us. But what about Python?

Unfortunately, there are strict concurrency limitations when a Python interpreter with its low-level locking mechanisms [GIL] is involved. See [4.1] and the discussions in [4.3]. In [3.1] we read:

“In Python’s C implementation, also known as CPython, threads don’t run in parallel. CPython has a global interpreter lock (GIL), which is a lock that basically allows only one Python thread to run at a time. This can negatively affect the performance of threaded Python applications because of the overhead that results from the context switching between threads. However, multithreading in Python can help you solve the problem of freezing or unresponsive applications while processing long-running tasks.”

Well, the latter is one of our main objectives. I quote from [3.3]:

“Threads share the same memory space, so are quick to start up and consume minimal resources. The shared memory makes it trivial to pass data between threads, however reading/writing memory from different threads can lead to race conditions or segfaults. In a Python GUI there is the added issue that multiple threads are bound by the same Global Interpreter Lock (GIL) — meaning non-GIL-releasing Python code can only execute in one thread at a time. However, this is not a major issue with PyQt where most of the time is spent outside of Python.“

So, when using Python threads (or Qt’s QThreads as a special variant; see below) you always run up against the GIL. For CPU-bound Python code, using threads will lead to little, if any, performance improvement, as there is no parallel execution of Python code. The total turnaround time can even become longer. Among other things, the performance with threads will depend on the amount of data handling and data transfer, on the amount of GIL-locking code in each thread and, of course, on the timing of thread interactions. There are limits. See also the caveats section of [3.3].

But the GIL is sometimes released when libraries written in C/C++ are invoked. This is the case e.g. for I/O operations [3.6] and also for the underlying C/C++ modules of PyQt (which wraps a lot of the original Qt objects). If, e.g., I/O is dominant, then threading can lead to a performance improvement.
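The effect of GIL-releasing calls can be seen with a small timing sketch. Here time.sleep() serves as an assumed stand-in for blocking I/O; like many I/O calls, it releases the GIL while it waits. Four threads that each wait 0.2 s should therefore finish in roughly 0.2 s instead of 0.8 s. The exact number depends on your machine, and a CPU-bound pure Python loop in place of the sleep would not show this overlap.

```python
import threading
import time


def fake_io(seconds):
    # time.sleep() releases the GIL, like many blocking I/O calls do.
    time.sleep(seconds)


start = time.perf_counter()
threads = [threading.Thread(target=fake_io, args=(0.2,)) for _ in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()
elapsed = time.perf_counter() - start

# The four waits overlap, so the total is close to 0.2 s, not 0.8 s.
print(f"4 x 0.2 s of waiting took {elapsed:.2f} s")
```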

Another piece of good news is that many Numpy array functions, which we heavily use in connection with ML tasks, release the GIL; see [4.6]. However, when you look into your Linux installation of Numpy via np.show_config() and np.show_runtime() you will probably find that it already runs array and linear algebra functionality on multiple threads with the help of libopenblas, namely on as many threads as you allow by setting your shell environment with e.g. “export OPENBLAS_NUM_THREADS=4” and “export OMP_NUM_THREADS=4”. Then you will not get any performance improvement from extra threading. See [4.8]. You may even lose performance. But the topic of Numpy and the GIL on a Linux machine is something for a separate post.

Anyway, threading in our case is not done because of performance improvement. But it will hopefully reduce code blocking effects in Jupyterlab notebooks and freezing Qt GUIs started from such notebooks. Still, the discussion includes a warning about our ultimate objective regarding Python controlled ML jobs in the background:

When we perform long running ML calculations and trainings, how much time is spent in GIL-locking Python code? We hope that this is only a small amount and that, in particular, code running on the GPU does not block anything. In addition, we should nest Numpy function calls where possible, to avoid spending time in GIL-locking Python code between them. But we will have to test this.

For resource-intensive Python applications, where performance is important, you should use the multiprocessing module [3.6]. It uses separate processes with independent Python interpreters. But this makes data exchange a bit more problematic. We are not going to dig deeper into this alternative in this post series.

What about general thread-safety?

Well, when you spawn Python threads or QThreads from a main thread the addresses of your Python objects are still available and usable to these threads. Threads share the same memory space (see e.g. [3.3] and [3.6]). Python objects may get a thread affinity [3.1], but they can be accessed from other threads.

Therefore, you could directly call methods of a widget object, which resides in the main thread, from another thread, but this is not (at all) a thread-safe procedure:

Race conditions and conflicting operations as well as variable changes with unclear outcome may occur.

Decisive variables of a thread (e.g. of the main thread) must in general be protected against unserialized changes from other threads. Otherwise the reactions to these variable changes may become unpredictable or conflicting. Think of a bank account from which two persons try to withdraw money in parallel. If the available money is not big enough for both requests you may need to block one request. I.e. you need to serialize the requests and invoke some controlling regulation in between. Similar situations may impact variables that control the status of widgets or other objects. As we shall see in a second, PyQt-GUI-widgets are not even reentrant [3.1].

Note that the GIL, with its low-level (micro-level) control and its sequential execution of Python bytecode across threads, is no help with this type of problem. The GIL does not prevent problems with the logical order in which commands are executed when we jump from thread to thread and manipulate one and the same central or global variable. Examples are given in [4.4], [4.5].
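The bank account example from above can be sketched in plain Python. The Account class is hypothetical; a threading.Lock serializes the check-and-withdraw step, so that exactly one of two concurrent withdrawals of 70 from a balance of 100 succeeds. Without the lock, the artificial sleep() makes it likely that both threads pass the balance check and the balance ends up negative, even though the GIL is present.

```python
import threading
import time


class Account:
    """Hypothetical bank account; the lock serializes check-and-withdraw."""

    def __init__(self, balance):
        self.balance = balance
        self._lock = threading.Lock()

    def withdraw(self, amount):
        with self._lock:                  # without the lock both threads could pass the check
            if self.balance < amount:
                return False              # request refused
            time.sleep(0.01)              # widens the window in which a race could happen
            self.balance -= amount
            return True


account = Account(100)
results = []
threads = [threading.Thread(target=lambda: results.append(account.withdraw(70)))
           for _ in range(2)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(account.balance, sorted(results))   # 30 [False, True]
```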

Therefore, we basically need thread-safe methods of inter-thread communication and thread interaction. In particular Qt widgets and also Matplotlib require them – and a logical serialization of change and update requests. But there is another obstacle, too.

Two major obstacles

There are two severe obstacles we must get control over:

- The handling of GUI events and the updates of GUI widgets only work (correctly) in the main thread that started our QApplication and the event loop. More details follow below. This is not specific to Qt, but applies to other GUI frameworks, too.

- Qt GUI-objects, in particular QWidgets, are neither reentrant nor thread safe. In addition Matplotlib’s functionality is not thread-safe, either.

I have learned the hard way that Qt widgets and Matplotlib figures are neither thread-safe nor work correctly if they are “controlled” directly from the background. For example, important information about a widget’s status may go to the wrong thread:

A PyQt QTextEdit widget can be fed directly with more and more text from the background. In my case, at some point the widget’s fixed height was no longer sufficient; a scrollbar had to be shown and adapted to the growing text length. And scrolling with the mouse in the foreground actually seemed to work perfectly as long as the background job ran. However, when the job had stopped and I tried to scroll again with the mouse, the IPython kernel crashed. Important information about the widget and its scrollbar had suddenly gone to nirvana.

Regarding Matplotlib, directly forcing the drawing of Axes with tick marks by calling the respective Matplotlib commands in parallel from different threads will also lead to severe errors.

All in all I should have taken warnings in the literature more seriously. In [3.4] we find the following about main and secondary (background) threads:

“As mentioned, each program has one thread when it is started. This thread is called the “main thread” (also known as the “GUI thread” in Qt applications). The Qt GUI must run in this thread. All widgets and several related classes, for example QPixmap, don’t work in secondary threads. A secondary thread is commonly referred to as a “worker thread” because it is used to offload processing work from the main thread.”

I also quote directly from the Qt documentation; see [3.5]:

“Although QObject is reentrant, the GUI classes, notably QWidget and all its subclasses, are not reentrant. They can only be used from the main thread. As noted earlier, QCoreApplication::exec() must also be called from that thread.”

The exec()-command starts the (main) event loop in the main thread. Fortunately, QtAgg has done this for us already.

Similar warnings exist about Matplotlib; see [6].

Summary: Both our PyQt GUI objects and all user interaction with its QWidget-based elements are bound to the main thread. This is also true for Matplotlib figures and their functionality. Threads spawned from the main thread are not suited to perform any direct interaction with Qt-widgets or to directly call their methods, update them or handle events occurring to them. Neither should we try to call Matplotlib functionality directly from background threads.

Both of the above obstacles force us to split our work in an intelligent way between the main thread and spawned threads. And we need proper means for inter-thread communication and some data exchange between threads:

The widgets in the main thread must be informed when we have prepared data in the background which should be used for updates of the graphics in a Qt-window. An understanding of how we can solve these problems with the help of Qt requires a consideration of synchronous vs. asynchronous execution of callbacks.

Signals/slots as a mechanism for inter-thread communication in Qt

Qt provides its signal/slot mechanism for a communication between all QObjects – whether residing in the same thread or in different threads.

Signals notify receiver objects about something that occurred at a sender object. Signals can carry information, i.e. data, with them. They are sometimes emitted automatically by certain (sender) objects when status changes occur. But you as a programmer can also emit signals on purpose via your Python code, e.g. via code for specific functions of objects residing in a background thread. Signals can be defined as static member variables of a Python class. We can even provide information regarding the (Python) type of the data to be carried with a signal.

Slots, on the other side, are simply member functions of receiver objects that react to a signal, exactly in the sense of a callback. Note that, in contrast to event handler functions, I did not mention the involvement of any event loop here. Actually, when the emitting and the receiving object live in the same thread, a receiver object’s method which has been bound to a signal is executed directly and synchronously after the signal has been emitted. No event loop interferes in such a case.

Many Qt widgets offer standard signals which are automatically emitted and which can easily be connected to slots, i.e. member functions of an object or widget; for examples see [5.1] and [5.4]. Actually, we have already used this variant of the signal/slot mechanism in the example I discussed in my first post. There, we explicitly bound status changing clicks of buttons to various member functions of a QMainWindow instance. This is an example of using a signal in connection with multiple slots. More details follow below. And see [1.1] for examples and more explanation.

So far we have spoken about sender and receiver objects which reside in the very same thread. However, the emitter of a signal can be associated with a different thread than the receiver object. The objects’ thread affinities (see below) decide this:

When the thread that emits a signal is different from the thread where it is received the signal transfer is changed from synchronous to asynchronous.

Actually, when transferred between different threads signals normally end up as events in the event queue of the receiving thread. This is at least the default! The signals are then handled by the receiving thread’s own event loop. This mechanism, properly used, will thus give us thread-safety by serializing the execution of widget-update requests in the main thread of our PyQt application. See below.

Note that Qt’s signal/slot mechanism abstracts away the underlying OS mechanisms for inter-thread communication.

Signals/slots vs. events

The attentive reader may ask now: What are the differences between signals and events? If a signal apparently can be transformed into an event, is there a difference at all? Are slots nothing else than callbacks for events? Spontaneous answer: Signals and events are not the same.

In my understanding, events happen to your Qt application (at least as long as you do not send them explicitly from your own code). Often events are OS-based and hardware-related (low-level). They form a finite set. Qt e.g. encapsulates X-window events on a Linux system and turns them into Qt events. The Qt application has to know about an event and must handle it somehow, or explicitly ignore it. The standard way of achieving this is to define a handler method for a given event type.

An event handler method must have a specific name. The user defines a concrete series of commands for an (overridden) virtual function whose name refers to the event type (e.g. mouseMoveEvent() or paintEvent()). The handler indicates whether it accepts or ignores the event, via the event’s accept() or ignore() method. (We saw this for the closeEvent in our first example.) Events are represented in Qt by QEvent objects which also carry information about what has happened, when and where. Actually, one can also construct custom event objects and send or post them; see [2.4]. So, events do not always result from an external action.

But a key point about events is: Event handling should be, and normally is, done in a serialized, asynchronous way, one event after the other. Here the event queue and the loops for event handling come into play. Putting an event into a queue defers its handling. For GUI classes, the relevant loop is the main event loop of the main thread. Each thread can have its own event loop, but only the event loop in the main thread is usable for widget interaction, i.e. for OS events and paintEvents; for event loops in threads see below. Anyway: An event loop and its dispatcher are the true receivers of an event.

In the first post of this series we just spoke about standard events occurring due to e.g. user interactions with widgets. These events were explicitly and sometimes “automatically” connected to callbacks in our test example used in the first post – if and when we used the right naming of the callback functions.

Signals, instead, are typically freely defined by the logic you implement for a widget (high-level). Signals are emitted, and some other objects or widgets are notified about it and react to it. For this purpose the receiver objects have so-called slots, which in principle are just member functions. Such slots can be connected to signals. However, in contrast to events, one can explicitly connect many signals to one slot and, vice versa, one signal to many slots. In the latter case, a single emission of the signal invokes all connected slots, one after the other. So, we have a multi-sender and multi-receiver model. Even threads themselves can send and receive signals [3.1].

Normally, i.e. when sender and receiver of a signal reside in the same thread, the slot function of a widget is called directly and not deferred. E.g., widgets in the main thread with slot functions connected to signals are “notified” directly if the sender lives in the same main thread as the receiver object. The main event loop is not involved. This means that the callback behind a slot is executed directly, i.e. synchronously. Slots are a true implementation of the callback technique. Further code in the sender has to wait until the receiver callback returns. So there is no accepting or ignoring of an event on the receiver’s side.

I quote from the documentation at [1.2], referring to sender and receiver in the same thread:

“When a signal is emitted, the slots connected to it are usually executed immediately, just like a normal function call. When this happens, the signals and slots mechanism is totally independent of any GUI event loop. “

However, to make the confusion complete: Signals and events can, in a way, be transformed into each other. See the discussions in [7.1]. The transformation of a signal into an event is important for inter-thread communication. If the sender is on a different thread, the signal is (normally) turned into an event. This enforces deferral, serialization and dispatching by the event loop.

Threads, signals and inter-thread communication

Qt supports threads. Threads can be created and later started by setting up QThread objects in PyQt. You should always be aware of the fact that a QThread object is not the same as the thread itself; it is just a convenient control object around a thread (see [3.2]). This control object lives in the thread where it was created. The actual thread is started by calling the start() method of the QThread object. Note also that QThread-based threads are in the end just well-equipped Python threads, well integrated with Qt object interaction.

QObjects can be moved to threads by the method moveToThread(). Thereby these objects get a thread affinity. We can explicitly connect the “started” signal of a thread to a method of an object in that thread, so that this method starts together with the thread. Afterward, the object can emit its own signals from its methods.

A QThread subclass can also override the virtual method run(), which is executed when the thread is started. This is the code location where a thread’s own event loop is started via exec(); the default implementation of run() does exactly this. As we will see in a second, such an event loop helps to handle events which were created from signals that other threads sent to slots in this thread.

Note: A Qt thread (represented by a QThread object in your Qt application) has a defined set of signals (e.g. “started”, “finished”) which are automatically emitted. QThread-controlled threads also offer a series of standard slots (e.g. start() and quit()), to which we can connect our own signals.

Inter-thread communication

In Qt inter-thread communication can be done with signals. As mentioned already, Qt evaluates whether the thread from which a signal is emitted is different from the thread in which a signal is received. This normally (!) decides upon how a signal is handled: directly/synchronously or asynchronously. As a standard, the communication between different threads happens asynchronously and through the event loop (!) of the target thread.

Each QThread can create its own event loop, which allows us to use the signal/slot mechanism between objects in different threads or directly between different threads. [3.5] tells us:

“An event loop in a thread makes it possible for the thread to use certain non-GUI Qt classes that require the presence of an event loop (such as QTimer, QTcpSocket, and QProcess). It also makes it possible to connect signals from any threads to slots of a specific thread.“

As we have seen, it is possible to use background worker threads for computational tasks, but updates of GUI widgets must be done in the main thread. For reasons of thread safety this, however, requires serialization. For events, this serialization is provided by the main event loop.

Combining signals with the event loop of a target thread requires that signals from another thread are transformed into events, which is technically possible. Whether a signal is placed as a kind of special event into the event loop of a target thread can actually be defined in your code: you can provide a “type” parameter when you define a signal/slot connection; see [5.5]. The available types are

“Qt.AutoConnection (standard), Qt.DirectConnection, Qt.QueuedConnection, Qt.BlockingQueuedConnection”

See [5.1], [5.2] and [5.6]. The default is the following: If the sending thread, which contains the signal-emitting object, and the receiving thread, which contains an object with a fitting slot, are different, then the signal moves as an event into the receiving thread’s event queue and is dispatched by the respective event loop. We speak of a “queued connection” between emitter and receiver objects.

A queued connection can explicitly be set by the type Qt.QueuedConnection. Qt.AutoConnection defaults to Qt.QueuedConnection if the sending and the receiving thread differ. See [5.1] and [5.2] for more information.

[5.2] also elaborates on the role of objects in different threads and of threads themselves as signal emitters. [5.2] and [5.4] underline that there are two methods to make the communication from a background thread to GUI widgets in the main thread safe: either by posting custom events to the event queue of the main thread or by using signals with a queued connection (Qt.QueuedConnection, which the default Qt.AutoConnection selects across threads). In the examples given later in this series we will work with signals only.

Note that, due to the asynchronous handling in a queued connection, signal emission does not block the code following the emit() call in the sender thread. This would be different for a BlockingQueuedConnection, where the sender waits until the slot has returned.

Thread safety by using the event queue in target threads

Now, if you think this all is complicated, you are right. However, changing signals to events and placing them in the event queue for dispatching by the receiving thread’s event loop is a clever move, in particular with respect to the GUI-objects/widgets in the main thread:

QWidgets along with other GUI classes are neither reentrant nor thread-safe. Making use of the event loop in the main thread enforces a serialization of the reaction to signals coming in from various other threads – most often ending in update-requests to GUI-widgets and related paintEvents. So, these requests line up in order with other update requests issued in the main thread or other background threads for the same widgets.

The whole trick brings thread-safety with it.

Closing threads and applications

One should not close an application while threads started from it are still working! We need special signals and functions (e.g. deleteLater()) to stop a thread and eliminate its objects before a PyQt application is actually closed. I will discuss this with examples in forthcoming posts of this series.

Threadpools

As creating and destroying threads costs overhead time, Qt offers the option to use thread pools (QThreadPool). This is interesting when we can split background jobs such that they deal with batches. I will come back to this option in a later post.

Summary

(Py)Qt provides everything we need to work with threads and inter-thread communication. We can now build a safe strategy for shifting workload to the background of a Jupyterlab notebook. This will in particular help us to trigger updates of PyQt widgets in the main thread with commands from other threads. By keeping the execution time of callbacks in the main thread short, we can hope for both a responsive Jupyter notebook and responsive graphical applications while the background jobs do their work. We can and must trust the scheduler of the OS to switch between the threads often enough that all threads receive a fair share of the GIL-bound Python execution time on the CPU. In the next post

Using PyQt with QtAgg in Jupyterlab – III – a simple pattern for background threads

I will discuss a pattern of workload distribution between the main thread with its GUI elements and two worker threads.

Links and literature

[1] Signals and slots

[1.1] https://www.pythonguis.com/tutorials/pyqt-signals-slots-events/

[1.2] https://doc.qt.io/qtforpython-6/overviews/signalsandslots.html

[1.3] https://www.pythontutorial.net/pyqt/pyqt-signals-slots/

[1.4] https://doc.qt.io/qtforpython-6/tutorials/basictutorial/signals_and_slots.html

[1.5] https://doc.qt.io/qtforpython-5/PySide2/QtCore/Signal.html

[1.6] https://doc.qt.io/qt-6/qt.html#ConnectionType-enum

[1.7] For custom signals see: https://docs.huihoo.com/pyqt/PyQt5/signals_slots.html

[2] Difference between “signal/slot” and “events/event handler”

[2.1] https://stackoverflow.com/questions/3794649/qt-events-and-signal-slots (!! very interesting and informative discussions)

[2.2] https://forum.qt.io/topic/117441/events-vs-signal-slot-system/7

[2.3] https://stackoverflow.com/questions/9323888/what-are-the-differences-between-event-and-signal-in-qt

[2.4] Events: https://doc.qt.io/qtforpython-5/overviews/eventsandfilters.html

[3] Threads

[3.1] https://realpython.com/python-pyqt-qthread/

[3.2] https://wiki.qt.io/Threads_Events_QObjects

[3.3] https://www.pythonguis.com/tutorials/multithreading-pyqt-applications-qthreadpool/

[3.4] https://het.as.utexas.edu/HET/Software/html/thread-basics.html

[3.5] https://doc.qt.io/qt-5/threads-qobject.html and https://doc.qt.io/qt-6/threads-qobject.html

[3.6] https://www.toptal.com/python/beginners-guide-to-concurrency-and-parallelism-in-python

[4] Threads and the Python GIL

[4.1] https://docs.python.org/3/library/threading.html

[4.2] https://realpython.com/python-pyqt-qthread/

[4.3] https://stackoverflow.com/questions/1595649/threading-in-a-pyqt-application-use-qt-threads-or-python-threads

[4.4] https://stackoverflow.com/questions/52507601/whats-the-point-of-multithreading-in-python-if-the-gil-exists

[4.5] https://stackoverflow.com/questions/40072873/why-do-we-need-locks-for-threads-if-we-have-gil

[4.6] Numpy and the GIL: https://superfastpython.com/numpy-vs-gil/

[4.7] https://medium.com/road-to-full-stack-data-science/escape-pythons-gil-with-numpy-2f6076bfa2e6

[4.8] https://realpython.com/python-parallel-processing/

[5] Threads and signals

[5.1] https://doc.qt.io/qt-6/threads-qobject.html#signals-and-slots-across-threads

[5.2] https://wiki.qt.io/Threads_Events_QObjects

[5.3] https://wiki.python.org/moin/PyQt5/Threading%2C_Signals_and_Slots

[5.4] https://realpython.com/python-pyqt-qthread/

[5.5] https://nikolak.com/pyqt-threading-tutorial/

[5.6] https://doc.qt.io/qtforpython-6/tutorials/basictutorial/signals_and_slots.html

[6] Matplotlib is not thread safe

[6.1] https://matplotlib.org/3.8.2/users/faq.html#work-with-threads

[6.2] https://stackoverflow.com/questions/34764535/why-cant-matplotlib-plot-in-a-different-thread

[6.3] https://matplotlib.org/stable/users/explain/figure/interactive_guide.html

[7] Matplotlib’s Qt event loop integration with IPython

[7.1] https://matplotlib.org/stable/users/explain/figure/interactive_guide.html

[7.2] https://github.com/matplotlib/matplotlib/blob/v3.8.2/lib/matplotlib/backends/backend_qt.py